AI Overviews CTR: 61% drop, signs of recovery, or both?

Three contradictory CTR studies on AI Overviews dropped in seven days. Here's what the data actually says — and why platforms keep changing the answer.

Three CTR studies on Google's AI Overviews landed in the past seven days. They don't agree.

One says CTR fell 61%. One says clicks didn't collapse. One says CTR is showing early signs of recovery. The headlines have already done the rounds in client Slacks, and we've spent the week answering the same question: which one is right?

Short answer: all of them, partially. Longer answer is more useful, because the contradictions tell you something about the system you're trying to optimise for.

What the three studies actually measured

The 61% figure tracks click-through rate from desktop SERPs in a sample of mid-funnel queries where an AI Overview is present, compared to the same queries before AI Overviews rolled out broadly. It's a like-for-like CTR comparison on the same query set.

The "clicks didn't collapse" piece looks at total organic clicks across a broader query mix, not CTR. Total clicks are roughly flat because total search volume has grown — informational queries that used to live on Google now also flow through ChatGPT and Perplexity, and what's left on Google still produces traffic, just with lower per-query CTR.

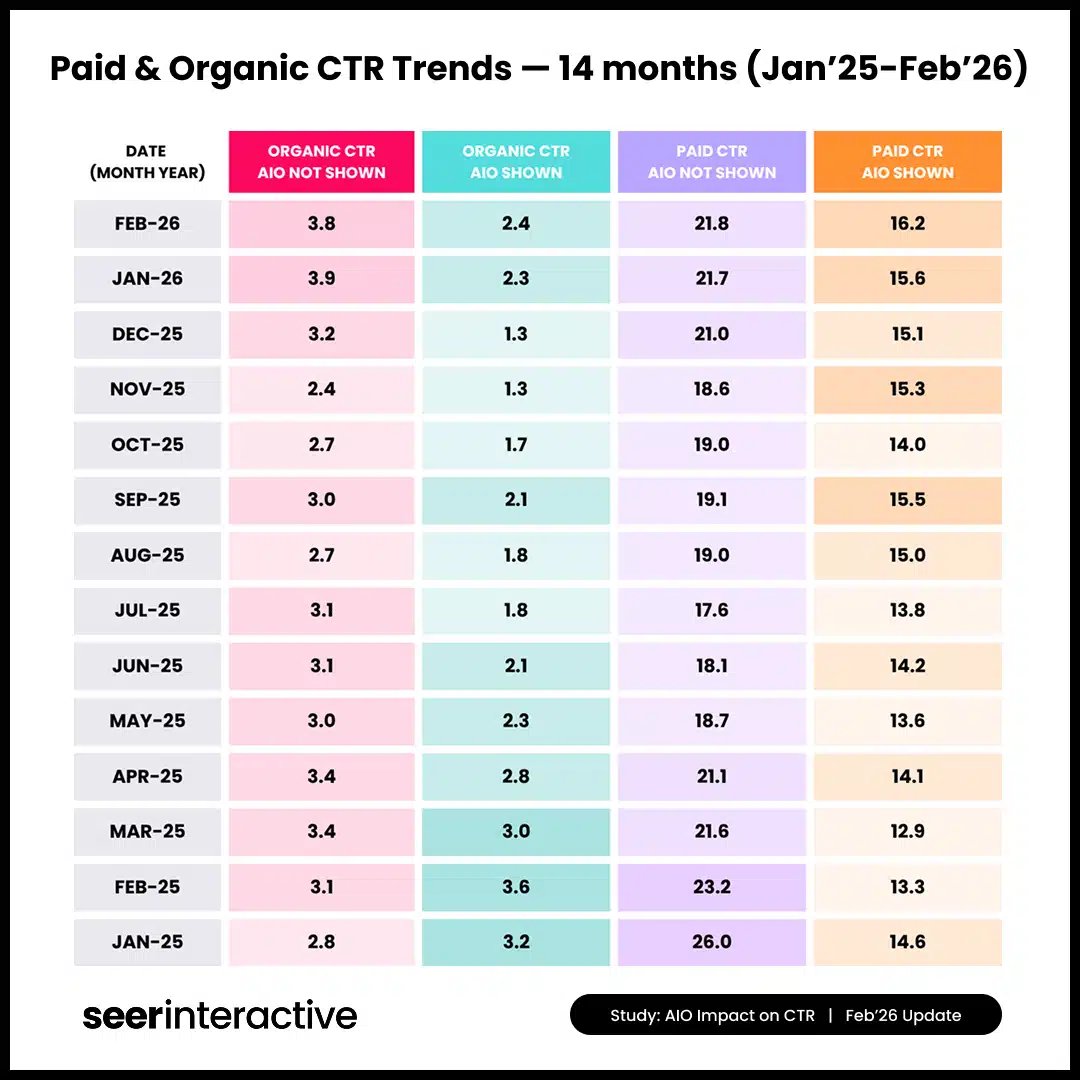

The "early signs of recovery" study looks at the trend over the last 90 days. CTR on AI Overview queries dropped sharply when the format expanded last year, but Google has been adjusting how source citations render — bigger thumbnails on some result types, more prominent citation chips on others — and the per-query CTR is creeping back up from the floor.

So all three are accurate. They're measuring different things, on different query mixes, over different windows.
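A quick bit of arithmetic shows how the first two findings can coexist. The sketch below uses made-up numbers, not figures from any of the three studies: one group of queries gets an AI Overview and loses 61% of its CTR, while a second group keeps its CTR and gains impressions. The `Segment` class and every value in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Clicks and impressions for one group of queries (hypothetical data)."""
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions


def relative_change(old: float, new: float) -> float:
    """Change from old to new, as a fraction of old."""
    return (new - old) / old


# Queries that now show an AI Overview: same impressions, far fewer clicks.
aio_before = Segment(impressions=10_000, clicks=1_000)   # 10% CTR
aio_after = Segment(impressions=10_000, clicks=390)      # 3.9% CTR

# Everything else: CTR unchanged, but impressions have grown.
other_before = Segment(impressions=10_000, clicks=1_000)  # 10% CTR
other_after = Segment(impressions=16_100, clicks=1_610)   # still 10% CTR

ctr_drop = relative_change(aio_before.ctr, aio_after.ctr)
clicks_change = relative_change(
    aio_before.clicks + other_before.clicks,
    aio_after.clicks + other_after.clicks,
)

print(f"CTR change on AI Overview queries: {ctr_drop:.0%}")
print(f"Change in total clicks: {clicks_change:.0%}")
```

In this toy case the like-for-like CTR comparison shows a 61% fall and the total-clicks view shows no change, and both are computed from the same data. Which one you see depends entirely on which rows you include and whether you divide by impressions.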

Why platforms keep changing the answer

This is the bit worth understanding, because it's not going to stop.

AI Overviews are an experiment Google is still running on its own users. The format, the citation style, the query types it triggers on, the prominence of the source links — all of it has been tweaked, often quietly, since launch. That's not unique to Google. ChatGPT search citations have been redesigned twice this year. Perplexity's source-card rendering has changed at least three times.

Every change shifts the CTR curve. A study that pulls data in March and one that pulls data in April aren't measuring the same product, even if they call it the same thing. Add in the fact that each researcher uses a different keyword universe (commercial vs. informational, branded vs. non-branded, US vs. UK), and the studies will keep looking like they contradict each other.

This is the natural state of play when a platform is still trying to learn what users actually want. Google doesn't know yet whether users prefer two source links or six, whether thumbnails increase trust or distract, whether AI Overviews on commercial queries help or annoy. So they keep moving things, and the data keeps moving with them.

The mistake is treating any single CTR figure as a stable benchmark. There isn't one yet. There may not be one for another twelve months.

What this means for UK marketers

Three things, in order of urgency.

1. Stop reporting AI Overview CTR as a single number to leadership. It will drift, and you'll be explaining the drift more than you're explaining the underlying performance. Report a directional read ("CTR on AI Overview queries is materially lower than non-AI queries; we're tracking the gap monthly") rather than a fixed percentage that anchors expectations.

2. Segment your reporting by query intent. AI Overviews behave very differently across "what is X", "best X for Y" and "X near me" queries. If you're looking at a single CTR average, you're hiding the dynamics. Pull Google Search Console (GSC) data with AI Overview presence flagged where you can, and split CTR by intent group.

3. Treat citation visibility as a separate metric from clicks. A query where you're cited in the AI Overview but the user doesn't click is not a wasted impression — it's a brand mention. We've started reporting "AI Overview citations" alongside CTR for clients in considered-purchase categories, because brand recall matters even when the click doesn't happen.
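For point 2, the split can be sketched in a few lines of Python. This is a minimal illustration under assumptions, not a finished classifier: the regex rules in `INTENT_RULES` are deliberately crude, the row format only loosely mirrors a GSC query export, and the `has_aio` flag is assumed to come from whatever tool or manual check you use to detect Overviews. The sample rows are invented.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately simple intent rules. First match wins.
INTENT_RULES = [
    ("local", re.compile(r"\bnear me\b")),
    ("commercial", re.compile(r"^best\b|\bvs\b|\breviews?\b")),
    ("informational", re.compile(r"^(what|how|why|who|when)\b")),
]


def classify_intent(query: str) -> str:
    """Return the first matching intent label, or 'other'."""
    q = query.lower().strip()
    for label, pattern in INTENT_RULES:
        if pattern.search(q):
            return label
    return "other"


def ctr_by_segment(rows):
    """Aggregate CTR per (intent, has_aio) pair.

    CTR is total clicks over total impressions for the segment,
    not an average of per-query CTRs.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [clicks, impressions]
    for row in rows:
        key = (classify_intent(row["query"]), row["has_aio"])
        totals[key][0] += row["clicks"]
        totals[key][1] += row["impressions"]
    return {
        key: clicks / impressions
        for key, (clicks, impressions) in totals.items()
        if impressions > 0
    }


# Invented sample rows; "has_aio" is an assumed field, not a GSC column.
rows = [
    {"query": "what is a heat pump", "clicks": 20, "impressions": 1000, "has_aio": True},
    {"query": "how does a heat pump work", "clicks": 10, "impressions": 500, "has_aio": True},
    {"query": "best heat pump for small house", "clicks": 60, "impressions": 1000, "has_aio": False},
    {"query": "heat pump installer near me", "clicks": 90, "impressions": 1000, "has_aio": False},
]

segments = ctr_by_segment(rows)
for (intent, has_aio), ctr in sorted(segments.items()):
    print(f"{intent:14} AI Overview={has_aio!s:5} CTR={ctr:.1%}")
```

Summing clicks and impressions before dividing matters here: averaging per-query CTRs would let a handful of low-volume queries swing the segment figure.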

What to do this week

- Audit your last 90 days of GSC data for the queries you care about, and look at the CTR trend, not the absolute level. If it's stabilised, you're past the worst.

- Pick three commercial queries and three informational queries you rank for. Run each one in an incognito window from a UK IP. See whether you're cited in the Overview, and whether the citation is prominent enough to attract a click.

- If you're a client-side marketer, replace any "AI Overview CTR is X%" line in your reporting with a trend-based read until the platform settles.
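The 90-day audit in the first bullet comes down to comparing windows rather than reading one number. A rough sketch, assuming you have daily clicks and impressions for your chosen queries, oldest day first: the 30-day window, the 5% tolerance and the labels are all arbitrary choices for illustration, and the sample data is invented.

```python
def window_ctrs(daily, window=30):
    """Aggregate CTR per consecutive window.

    daily: list of (clicks, impressions) tuples, oldest first.
    Returns one CTR per window, or None for a window with no impressions.
    """
    ctrs = []
    for start in range(0, len(daily), window):
        chunk = daily[start:start + window]
        clicks = sum(c for c, _ in chunk)
        impressions = sum(i for _, i in chunk)
        ctrs.append(clicks / impressions if impressions else None)
    return ctrs


def trend_label(ctrs, tolerance=0.05):
    """Compare the latest window with the one before it.

    tolerance is a relative threshold: moves smaller than this
    in either direction are treated as noise.
    """
    if len(ctrs) < 2 or ctrs[-2] is None or ctrs[-1] is None or ctrs[-2] == 0:
        return "insufficient data"
    change = (ctrs[-1] - ctrs[-2]) / ctrs[-2]
    if change > tolerance:
        return "recovering"
    if change < -tolerance:
        return "declining"
    return "stable"


# Invented data: 90 days of (clicks, impressions), oldest first.
daily = [(40, 1000)] * 30 + [(20, 1000)] * 30 + [(20, 1000)] * 30

ctrs = window_ctrs(daily)
label = trend_label(ctrs)
print([f"{c:.1%}" for c in ctrs], label)
```

Here CTR halves between the first and second window and then holds, so the label is "stable" even though the absolute level is well below where it started. That is the read the audit is after.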

The single most useful thing you can do is internalise that the answer will change again next quarter. If your reporting can absorb that without rewriting itself, you're set up for the next two years of search.

FAQ

Will AI Overview CTR ever stabilise?

Probably, but not soon. Expect at least another twelve months of formatting changes as Google tunes citation prominence and Overview triggering criteria.

Should I deoptimise for AI Overview queries?

No. The queries are still happening, and being cited in an AI Overview is a brand signal even when the click doesn't follow. The right response is to track citations separately, not to retreat from the queries.
